The AI Agent Production Gap Analysis: Why Most Projects Never Reach Deployment

May 4, 2026

There is no shortage of impressive AI agent demos. In boardrooms, at technology conferences, and across enterprise innovation labs, AI agents are performing tasks that once required entire teams, such as scheduling workflows, processing documents, routing customer queries, and even writing and reviewing code.

The demos are compelling. The pilots are promising. And yet, for the vast majority of organizations, that is exactly where the journey ends.

A March 2026 survey of 650 enterprise technology leaders found that 78% of enterprises have at least one AI agent pilot running, but only 14% have successfully scaled an agent to organization-wide operational deployment. This is not a minor gap. It is a structural failure, one that is costing businesses time, capital, and competitive ground.

The question is no longer whether AI agents work. The models are capable, the tooling has improved, and the business case is well established. The real question is why, despite record investment and near-universal experimentation, so few organizations can cross the line from proof-of-concept to production.

We at AIChief answer that question precisely, drawing on research from McKinsey, IBM, Deloitte, Forbes, Gartner, and IDC, and outlining what enterprises must do to close the gap.

The Scale of the Problem: By the Numbers

Most enterprises are experimenting with AI, but very few are scaling it successfully. Before examining the causes, the data demands attention. The production gap is not a fringe problem affecting a handful of unprepared companies. It is the defining challenge of enterprise AI in 2026.

Key findings from recent reports:

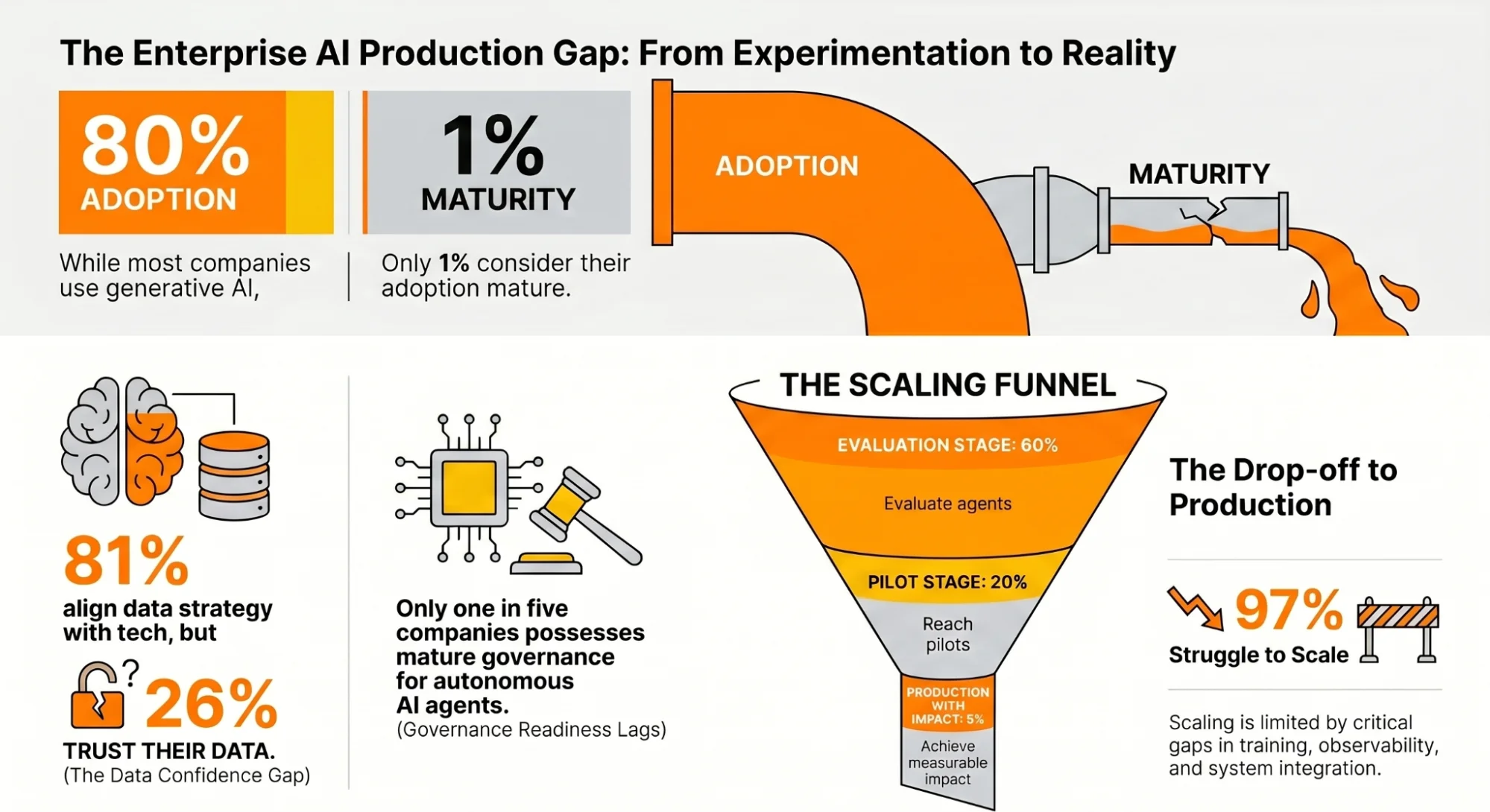

- McKinsey’s 2025 State of AI report shows that nearly 80% of companies have adopted generative AI, yet a similar share reports no material impact on earnings, a pattern known as the “gen AI paradox.” Only 1% consider their AI adoption mature.

- IDC research (AWS) finds that 97% of enterprises have not figured out how to scale AI agents, limited by gaps in training, observability, and integration.

- IBM’s Institute for Business Value reports that while 81% of organizations align data strategy with technology, only 26% believe their data can support AI-driven revenue.

- Deloitte’s 2026 report shows that just one in five companies has mature governance for autonomous AI agents.

- As John Pettit noted in the Forbes Technology Council, the challenge in 2026 is no longer adoption, but proving measurable value as governance complexity rises.

Across surveys, adoption drops sharply: 60% evaluate AI agents, 20% reach pilots, and only 5% achieve production with measurable impact.
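To make the drop-off concrete, the stage-to-stage conversion implied by those three figures can be worked out directly. This is a rough sketch: it treats the percentages as if they described one cohort, which is an assumption, since the text aggregates them across surveys. The function name `stage_conversion` is illustrative only.

```python
# Funnel shares quoted in the text (percent of enterprises at each stage).
funnel = {"evaluate": 60, "pilot": 20, "production": 5}

def stage_conversion(funnel: dict[str, float]) -> dict[str, float]:
    """Return the percentage of each stage that survives to the next stage."""
    stages = list(funnel.items())
    return {
        f"{a}->{b}": round(100 * vb / va, 1)
        for (a, va), (b, vb) in zip(stages, stages[1:])
    }

rates = stage_conversion(funnel)
overall = round(100 * funnel["production"] / funnel["evaluate"], 1)

print(rates)    # {'evaluate->pilot': 33.3, 'pilot->production': 25.0}
print(overall)  # 8.3
```

Read this way, roughly one in three evaluations becomes a pilot, one in four pilots reaches production, and about one in twelve evaluations ends in production with measurable impact.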

These numbers reveal a consistent, systemic pattern. The gap is not in ambition, investment, or access to technology. It is in operational readiness, organizational structure, data infrastructure, and governance.

What Is the AI Agent Production Gap?

The AI agent production gap refers to the widening distance between the number of enterprises experimenting with AI agents and the number that have successfully integrated them into live, reliable, business-critical workflows.

Unlike traditional software deployments, AI agents introduce a fundamentally different class of operational challenges. As McKinsey notes, agents represent a shift from reactive tools to autonomous, goal-driven systems.

In live environments, those systems must contend with:

- Messy, fragmented data

- Unpredictable edge cases

- Regulatory constraints

- Real consequences for failure

As Bernard Marr, a regular Forbes contributor and world-renowned technology advisor, notes, AI agents are no longer just answering questions or generating content; they are carrying out complex, multi-step processes and interfacing with third-party services.

Think of them as virtual co-workers that are always on, monitoring and adjusting processes in real time. That shift from assistant to actor is precisely what makes production deployment so much harder than the demo suggests. The gap is not technological. It is organizational.

The Five Root Causes of Deployment Failure

Research from multiple authoritative sources converges on five core reasons why AI agent projects stall before reaching production:

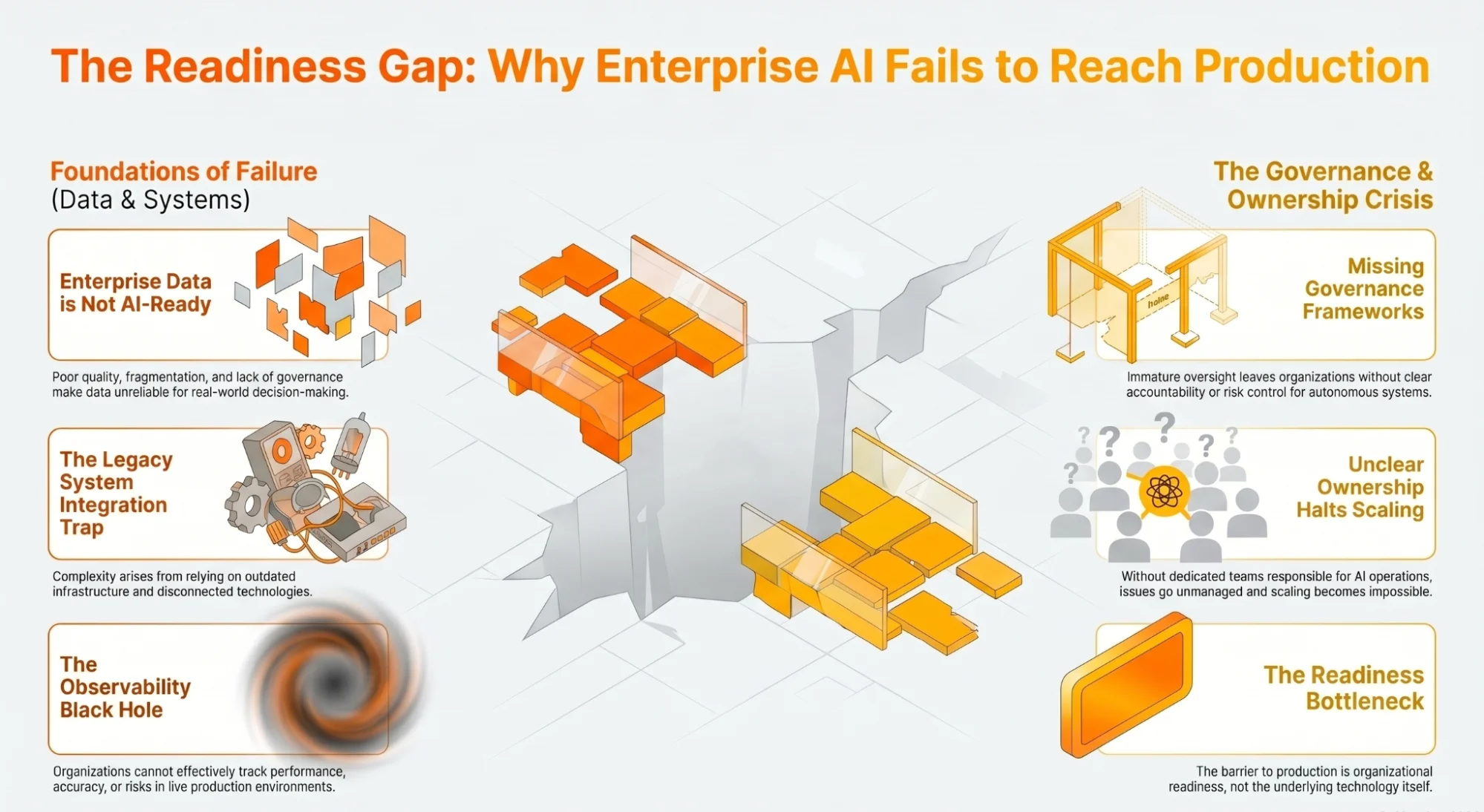

1. Data Is Not AI-Ready

The most fundamental barrier is also the least visible during the demo phase: enterprise data is not prepared for autonomous AI systems.

The IBM Institute for Business Value’s 2025 CDO study, surveying 1,700 data leaders across 19 industries, found that while 80% have begun developing datasets to train AI agents, 79% remain early in defining how to scale and govern them.

Barriers such as accessibility, completeness, integrity, accuracy, and consistency continue to prevent organizations from fully leveraging their data for AI deployment. Research aggregated from IDC, Deloitte, and McKinsey further reinforces this gap:

- 57% of organizations say their data is not AI-ready

- By 2027, poor data quality could lead to a 15% productivity loss when scaling AI

AI agents do not just use data; they act on it. They make decisions based on it. They expose every inconsistency within it. A system that performs well on clean, curated demo data will fail when exposed to the fragmented, siloed, and inconsistently governed data environments typical of large enterprises.
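A minimal sketch of what a pre-deployment readiness check might look like is shown below. It counts a few of the issues named above (completeness, integrity, accuracy, consistency) on a handful of records. The records, field names, and the `ALLOWED_COUNTRIES` reference list are all hypothetical, and a real check would cover far more rules.

```python
from collections import Counter

# Hypothetical supplier records merged from two siloed systems.
records = [
    {"supplier_id": "S-001", "country": "US", "lead_time_days": 12},
    {"supplier_id": "S-002", "country": "usa", "lead_time_days": None},
    {"supplier_id": "S-001", "country": "US", "lead_time_days": 12},
    {"supplier_id": "S-003", "country": "DE", "lead_time_days": -4},
]

ALLOWED_COUNTRIES = {"US", "DE"}  # assumed reference list of valid codes

def readiness_report(rows: list[dict]) -> dict[str, int]:
    """Count simple completeness, integrity, accuracy, and consistency issues."""
    ids = Counter(r["supplier_id"] for r in rows)
    return {
        # Completeness: a required field is empty.
        "missing_lead_time": sum(r["lead_time_days"] is None for r in rows),
        # Integrity: the same key appears more than once.
        "duplicate_ids": sum(n - 1 for n in ids.values() if n > 1),
        # Accuracy: a value that cannot be true in the real world.
        "negative_lead_time": sum(
            r["lead_time_days"] is not None and r["lead_time_days"] < 0
            for r in rows
        ),
        # Consistency: a code that does not follow the shared convention.
        "unknown_country_code": sum(
            r["country"] not in ALLOWED_COUNTRIES for r in rows
        ),
    }

report = readiness_report(records)
print(report)
```

Each of the four sample records looks plausible on its own; the issues only show up when the set is checked against explicit rules, which is the kind of scrutiny a curated demo dataset never receives.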

This challenge is especially acute in complex, data-intensive environments.

To understand how this plays out in practice, see AIChief’s analysis of AI in Supply Chain Management, where fragmentation across procurement, logistics, and inventory systems is a primary barrier to scaling beyond pilot deployments.

2. Governance Frameworks Are Absent or Immature

AI agents that can act autonomously require governance frameworks that most enterprises have not yet built. According to Deloitte’s 2026 research, only one in five organizations has a mature governance model for autonomous AI agents, even as agentic AI adoption continues to accelerate.

IBM’s Cost of a Data Breach Report 2025 highlights the scale of the gap:

- 63% of organizations lack AI governance initiatives entirely

- High levels of “shadow AI” significantly increase risk, adding roughly $670,000 to the cost of a data breach in such environments

McKinsey’s research makes the accountability issue equally clear:

- Only 28% of organizations say the CEO owns AI governance

- Just 17% report board-level oversight

McKinsey’s agentic AI security research also identifies this as the central challenge. As agents evolve from passive copilots to proactive actors, the complexity they introduce is not primarily technical; it is organizational.

The real challenge lies in coordination, judgment, and trust. Organizations must establish governance over systems that can act autonomously.

The Forbes Technology Council reinforces this point: As AI agents begin coordinating tasks across workflows, governance complexity increases sharply, and organizations that treat governance as an afterthought will face compounding operational and compliance risks.

3. Legacy System Integration Is Deeply Complex

Enterprise technology environments were not built for autonomous AI agents. Most large organizations operate on a patchwork of:

- Legacy systems

- Disconnected data warehouses

- Proprietary APIs

- Decades-old infrastructure

Connecting AI agents into this environment is not a configuration task; it is a significant engineering undertaking. A March 2026 analysis of 650 enterprise technology leaders highlights the scale of this challenge:

- Integration complexity was cited in 89% of scaling failures

- Organizations using external vendors or system integrators achieved 2x higher success rates compared to those relying solely on internal builds

As IBM notes in its 2026 enterprise AI roadmap, many organizations face environments where:

- Sensitive data must be protected

- Compliance requirements are strict

- Workloads are mission-critical

These constraints require an approach that balances security, governance, and innovation. The solution is not to replace legacy systems overnight. It is to bring AI to where enterprise work already happens, through careful, governed integration, rather than forced platform migration.

4. Observability and Monitoring Infrastructure Does Not Exist

A pilot can run without monitoring. Production cannot. Research from Cleanlab’s AI in Production 2025 report, based on professionals already running agents in live environments, found that observability and evaluation are the lowest-rated components of the enterprise AI stack.

- Fewer than one in three teams are satisfied with their current capabilities

- Nearly half are actively evaluating alternatives

Investment priorities reflect this: 62% of production teams identify observability as their top investment area.

This highlights a critical reality: the challenges that matter most in production (latency, reliability, output quality at scale, and cost) do not surface during pilots operating under limited and controlled conditions.

As IBM emphasizes in its 2026 priorities for AI and technology leaders, traditional governance approaches are no longer sufficient for agentic systems. Organizations must move beyond monitoring uptime to monitoring runtime, including:

- Accuracy

- Model drift

- Context relevance

- Cost

Design must also capture reasoning traces to ensure accountability, while independent guardrails continuously test for jailbreaks, prompt injection, and data poisoning.
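As an illustration of monitoring runtime rather than uptime, the sketch below records one trace per agent run and summarizes the four signals listed above. It is a simplified sketch under stated assumptions: the class names `AgentTrace` and `RuntimeMonitor` are hypothetical, drift is approximated as a drop in accuracy against a fixed baseline, and the relevance score is assumed to come from some separate scorer.

```python
from dataclasses import dataclass, field

@dataclass
class AgentTrace:
    """One agent run: what it did, why, and what it cost."""
    run_id: str
    reasoning_steps: list[str]   # captured reasoning trace for accountability
    correct: bool                # outcome of an offline or human evaluation
    context_relevance: float     # 0.0 to 1.0, from an assumed relevance scorer
    cost_usd: float

@dataclass
class RuntimeMonitor:
    baseline_accuracy: float
    drift_tolerance: float = 0.05
    traces: list[AgentTrace] = field(default_factory=list)

    def record(self, trace: AgentTrace) -> None:
        self.traces.append(trace)

    def summary(self) -> dict:
        if not self.traces:
            raise ValueError("no traces recorded yet")
        n = len(self.traces)
        accuracy = sum(t.correct for t in self.traces) / n
        return {
            "runs": n,
            "accuracy": round(accuracy, 3),
            # Assumption: drift is flagged when accuracy falls below the
            # baseline by more than the tolerance.
            "drift_alert": accuracy < self.baseline_accuracy - self.drift_tolerance,
            "avg_context_relevance": round(
                sum(t.context_relevance for t in self.traces) / n, 3
            ),
            "total_cost_usd": round(sum(t.cost_usd for t in self.traces), 4),
        }

monitor = RuntimeMonitor(baseline_accuracy=0.9)
monitor.record(AgentTrace("r1", ["parsed invoice", "matched PO"], True, 0.9, 0.02))
monitor.record(AgentTrace("r2", ["parsed invoice", "matched PO"], True, 0.8, 0.03))
monitor.record(AgentTrace("r3", ["parsed invoice", "no PO found", "guessed vendor"], False, 0.4, 0.05))

summary = monitor.summary()
print(summary)
```

Storing the reasoning steps alongside the outcome means a failed run such as `r3` can be audited after the fact, not just counted.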

Without this infrastructure in place before deployment, production agents remain untrustworthy. Organizations that deploy without it consistently face failures that damage both operations and long-term confidence in AI adoption.

5. Organizational Ownership Is Unclear

The fifth root cause is structural: nobody owns the production agent. The March 2026 survey of enterprise technology leaders found that unclear ownership is one of the most frequently cited causes of AI scaling failure.

The reason is a chain of failures. When ownership is unclear, monitoring gaps go unfilled. When monitoring gaps exist, quality issues go undetected. Over time, these issues compound into full production failures.

Organizations that successfully crossed the pilot-to-production gap shared one defining structural practice: They established a dedicated AI operations function, distinct from both IT and business units, responsible for evaluation frameworks, production monitoring, and incident response.

By contrast, organizations that distributed this responsibility across existing teams consistently failed to scale. This aligns with McKinsey’s observation: Organizations are very good at running AI projects, but far fewer know how to turn those projects into a new operating baseline.

The transition from AI-aware to AI-native requires treating governance as infrastructure, building risk management, auditing, and oversight capabilities before they are urgently needed.

The "Pilot Purgatory" Phenomenon

One of the most consequential patterns in enterprise AI is what McKinsey researchers describe as the “pilot loop,” a cycle where organizations launch multiple proofs-of-concept, generate encouraging results, and then fail to transition any of them into sustained production. The data makes the pattern clear:

- 88% of AI proofs-of-concept never reach production; put differently, only 4 out of 33 make it to deployment

- Since 2023, just 25% of AI initiatives have delivered expected ROI

The result is a widening competitive divide. Organizations that industrialize a small number of high-value agentic use cases will pull ahead, while others remain trapped in an endless cycle of pilots. The pilot loop is not a failure of ambition or technology. It is a failure of organizational architecture. Pilots are typically:

- Isolated from core systems

- Under-resourced for scale

- Evaluated against criteria that don’t reflect production realities

When a pilot “succeeds,” the path to production requires entirely different capabilities, including data governance at scale, integration engineering, monitoring infrastructure, security controls, and change management.

None of these are built during the pilot phase. Deloitte’s 2026 enterprise research identifies the AI skills gap as the single biggest barrier to integration. However, skills alone are not enough.

Only 34% of organizations are truly reimagining how their business operates, while the majority continue to layer AI onto existing processes without addressing the structural and governance gaps that prevent production deployment.

For a practical look at how AI agents are being deployed successfully across high-complexity enterprise environments today, and which platforms are enabling that deployment, explore our curated review of enterprise AI agent platforms currently being adopted by forward-looking organizations.

What Separates Organizations That Successfully Deploy

Despite the systemic challenges, a minority of organizations have successfully crossed the production threshold. Their practices offer a clear blueprint.

1. Start Narrow, Then Expand

Successful deployments begin with agents scoped to a single, well-defined task with measurable outputs, such as a document classifier, a data enrichment pipeline, or a routing agent.

Agents designed for broad, open-ended tasks consistently fail at scale due to compounding quality variance and untestable edge cases. Expansion only happens after the initial system proves stable, typically over 90+ consecutive days.
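One way to turn the stability requirement into an explicit gate is sketched below. It assumes each day has already been reduced to a pass/fail result against whatever quality bar the team uses; the function names and the 90-day default are illustrative, taken from the figure above.

```python
def trailing_stable_days(daily_pass: list[bool]) -> int:
    """Count consecutive passing days, counting back from the most recent day."""
    streak = 0
    for passed in reversed(daily_pass):
        if not passed:
            break
        streak += 1
    return streak

def ready_to_expand(daily_pass: list[bool], required_days: int = 90) -> bool:
    """Allow scope expansion only after an unbroken run of stable days."""
    return trailing_stable_days(daily_pass) >= required_days

# Hypothetical history: 50 good days, one failure, then 89 good days.
history = [True] * 50 + [False] + [True] * 89
print(trailing_stable_days(history), ready_to_expand(history))  # 89 False

history.append(True)  # one more stable day
print(trailing_stable_days(history), ready_to_expand(history))  # 90 True
```

Counting only the trailing streak means a single failed day resets the clock, which matches the "consecutive days" wording.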

2. Treat Governance as a Prerequisite

Organizations that build risk management, auditing, and oversight capabilities before scaling AI deployments move faster than those that treat governance as a follow-on activity.

McKinsey’s research is clear: Organizations that scale AI safely are the ones that scale it fastest.

3. Create Dedicated AI Operations Ownership

AI agents in production require continuous management, including feedback, correction, accountability, and evaluation. Organizations that succeed treat agents like digital employees:

- Onboarding them

- Monitoring them

- Managing them with clear accountability

Those that deploy agents without ownership structures consistently experience performance degradation over time.

4. Invest in Data Readiness First

Research from the IBM Institute for Business Value shows that organizations investing in data quality, governance, and accessibility before scaling achieve significantly higher success rates.

Those that try to fix data issues only after deployment failures have begun rarely succeed.

5. Align Autonomy with Risk

Successful organizations limit agent autonomy in early deployments and expand it gradually as monitoring capabilities and operational confidence improve. This phased approach reduces the risk of high-impact failures while enabling safe and scalable growth.
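A phased approach like this can be expressed as a simple policy gate: each action carries a risk level, and the agent may execute only actions at or below its current autonomy ceiling, escalating everything else to a human. The sketch below is illustrative; the action names, risk assignments, and the `decide` function are hypothetical.

```python
from enum import IntEnum

class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3

# Hypothetical action catalogue with assumed risk levels.
ACTION_RISK = {
    "classify_document": Risk.LOW,
    "update_crm_record": Risk.MEDIUM,
    "issue_refund": Risk.HIGH,
}

def decide(action: str, autonomy_ceiling: Risk) -> str:
    """Return 'execute' if the action is within the ceiling, else 'escalate'."""
    # Actions missing from the catalogue are treated as high risk by default.
    risk = ACTION_RISK.get(action, Risk.HIGH)
    return "execute" if risk <= autonomy_ceiling else "escalate"

# Early deployment: the agent may act alone only on low-risk actions.
print(decide("classify_document", Risk.LOW))  # execute
print(decide("issue_refund", Risk.LOW))       # escalate

# Later, once monitoring has matured, the ceiling is raised.
print(decide("update_crm_record", Risk.MEDIUM))  # execute
```

Raising the ceiling is then a single, auditable configuration change rather than a rewrite of the agent.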

Across all successful deployments, one pattern stands out. They treat AI agents not as tools to test, but as systems to operate.

The Security Dimension: An Underappreciated Risk

As AI agents move toward production, security becomes a first-order challenge that most organizations are not adequately prepared to address. IBM’s cybersecurity predictions for 2026 identify agentic AI as introducing an entirely new class of enterprise risk.

Autonomous AI agents operate across departments, systems, and datasets. A single workflow may involve accessing sensitive records, triggering downstream actions, and coordinating with other agents. While each action may be permissible in isolation, their combined effect can introduce significant risk. The scale of this risk is already visible.

- 80% of organizations report encountering risky AI agent behaviors

- Common issues include improper data exposure and unauthorized system access
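The point that individually permissible actions can add up to a risky workflow can be checked mechanically. The sketch below flags any workflow whose set of actions contains a known risky combination. The combinations and action names are hypothetical examples, not drawn from the cited research.

```python
# Hypothetical combinations that are risky together, even though each
# action on its own would be allowed.
RISKY_COMBINATIONS = [
    frozenset({"read_customer_pii", "send_external_email"}),
    frozenset({"modify_vendor_bank_details", "approve_payment"}),
]

def flag_workflow(actions: list[str]) -> list[frozenset]:
    """Return every risky combination fully contained in the workflow."""
    performed = set(actions)
    return [combo for combo in RISKY_COMBINATIONS if combo <= performed]

workflow = ["read_customer_pii", "summarize_ticket", "send_external_email"]
flags = flag_workflow(workflow)
print(len(flags))  # 1

safe_workflow = ["read_customer_pii", "summarize_ticket"]
print(flag_workflow(safe_workflow))  # []
```

A per-action permission check would pass both workflows; only the workflow-level view catches the first one.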

IBM’s analysis classifies AI agents as “digital insiders,” entities operating within enterprise systems with varying levels of privilege and authority. These agents can cause harm unintentionally through poor alignment or deliberately if compromised.

McKinsey’s agentic AI security research highlights an additional layer of complexity: agent-to-agent interaction. As multi-agent ecosystems expand, organizations must ensure that inter-agent communication is authenticated, logged, and permissioned.

Protocols designed to manage these interactions, including Anthropic’s Model Context Protocol, IBM’s Agent Communication Protocol, Google’s Agent2Agent protocol, and Cisco’s Agent Connect Protocol, are not yet fully mature.
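To show what "authenticated, logged, and permissioned" means at the smallest scale, here is a generic sketch built only on Python's standard library. It does not implement any of the protocols named above; the agent names, shared keys, and permission table are hypothetical, and a real deployment would keep keys in a secrets manager.

```python
import hashlib
import hmac
import json
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent-bus")

# Hypothetical shared secrets and permissions, hard-coded for illustration.
AGENT_KEYS = {"billing-agent": b"demo-key-1", "support-agent": b"demo-key-2"}
PERMISSIONS = {"support-agent": {"lookup_invoice"}}

def _digest(key: bytes, payload: dict) -> str:
    body = json.dumps(payload, sort_keys=True).encode()
    return hmac.new(key, body, hashlib.sha256).hexdigest()

def sign(sender: str, payload: dict) -> str:
    """Sender side: sign the message with the sender's key."""
    return _digest(AGENT_KEYS[sender], payload)

def accept(sender: str, payload: dict, signature: str) -> bool:
    """Receiver side: authenticate, check permission, and log the decision."""
    key = AGENT_KEYS.get(sender)
    if key is None:
        log.warning("rejected: unknown sender %s", sender)
        return False
    authenticated = hmac.compare_digest(_digest(key, payload), signature)
    permitted = payload.get("action") in PERMISSIONS.get(sender, set())
    log.info(
        "sender=%s action=%s authenticated=%s permitted=%s",
        sender, payload.get("action"), authenticated, permitted,
    )
    return authenticated and permitted

msg = {"action": "lookup_invoice", "invoice_id": "INV-42"}
sig = sign("support-agent", msg)
print(accept("support-agent", msg, sig))                          # True
print(accept("support-agent", {**msg, "invoice_id": "X"}, sig))   # False
```

The second call fails because the payload was altered after signing, and every decision, accepted or not, leaves a log line behind.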

The implication is clear: The security gap is not a reason to avoid agentic AI. It is a reason to invest in governance and security infrastructure before deployment.

The Road Ahead: 2026 and Beyond

The trajectory of enterprise AI agent adoption is clear.

- Gartner projects that 33% of enterprise software applications will include agentic AI by 2028, up from less than 1% in 2024.

- IDC estimates that by 2027, 63.6% of organizations will have launched at least ten AI agents.

This represents a rapid shift from isolated experimentation to scaled deployment. Kate Blair of IBM reinforces this timeline: If 2025 was the year of the agent, 2026 is expected to be the year when multi-agent systems move into production.

However, that transition depends on the operational and governance foundations that most organizations are still in the process of building. Bernard Marr frames this as a defining moment for enterprise strategy.

Organizations are entering a new phase where AI agents automate entire workflows, while emerging risks, including quantum-era threats, shift from theoretical to practical concerns.

The stakes for organizations that fail to build operational readiness are no longer abstract. McKinsey & Company frames the challenge in equally direct terms: The next chapter of enterprise AI will not be defined by those with the most advanced technology, but by those with the discipline to fundamentally reimagine how they operate.

The transition from AI-aware to AI-native is the defining organizational challenge of 2026. It requires moving AI from the edge of workflows into the core of how a company decides, executes, and learns.

The implication is clear: The era of experimentation is ending. The era of operationalization has begun. As McKinsey notes, making that shift is ultimately a leadership decision, one that only the CEO can drive.

Conclusion: The Gap Is Organizational, Not Technological

The AI agent production gap is real, widespread, and consequential. But it is not inevitable. The models are capable. The tooling is maturing. The business case, spanning customer operations, supply chain management, software engineering, financial services, and beyond, is already well established.

McKinsey & Company has estimated that generative AI, the technology that agents build on, could unlock $2.6 to $4.4 trillion annually across more than 60 enterprise use cases. This value is not speculative. It is achievable for organizations that treat deployment readiness with the same rigor as AI capability.

According to AIChief’s editorial analysis, closing the production gap requires five foundational investments:

- AI-ready data

- Mature governance frameworks

- Legacy system integration strategy

- Production-grade observability

- Dedicated organizational ownership

Organizations that build these foundations before scaling will deploy more reliably and faster, and they will outpace competitors still trapped in the pilot loop. The takeaway is simple: The demo was never the destination. Production is.

Avalon Brooks
